Who owns AI-generated material?

If you write a novel, compose a song, or paint a picture, this is your artistic creation. If you come up with an innovative idea, invent a new solution, or make a new scientific discovery, it's yours. Someone else cannot take credit for it. The same applies to anything you create, and this is what intellectual property laws are for — to define ownership of ideas and protect their creator.

But what about AI-generated material? The human "originator" of this material will have had some input. They may even have come up with the initial idea. But it is the generative AI solution that did the heavy lifting and turned this idea into a reality. So who owns the intellectual property here?

This is a difficult question, and one that international legislation has not yet got to grips with. Law firm Norton Rose Fulbright offered some possible answers to this conundrum:

- The owner of the AI system may have legal ownership of the created work

- Or the developer who coded the system

- Or the trainer of the AI system

- Or the operator of the AI system

- Or "some permutation of the above"

You'll notice the fairly vague language used here. The truth is, we're still lacking a strong set of legal definitions for AI intellectual property. The US Copyright Office has taken the position that material generated entirely by AI, with no human authorship, cannot be protected by copyright, which would effectively leave such works in the public domain. But this solution may be too simplistic to apply in all cases.

Can AI-generated mimicry infringe copyright?

In some instances, AI is not just used to generate a work — it is used to directly mimic a human artist or another creator.

This has sparked a high-profile debate recently, as platinum-selling recording artists like Billie Eilish and Nicki Minaj put their signatures to a letter calling for tough measures against "predatory" AI. In a world where AI tools can create deepfake versions of pop stars, many are afraid this will "infringe upon our rights and devalue the rights of human artists."

The legal and ethical implications of this are difficult to untangle. From an ethical point of view, should individuals use AI tools to create soundalikes of already-popular artists? The obvious answer is no, although human artists have certainly profited in the past by sounding a bit like their more successful counterparts.

Legally, however, the question becomes more difficult. Currently, there is very little that can be done to stop individuals using this deepfake method. Universal Music succeeded in forcing the removal of a work that mimicked the label's own artists, but this was achieved through a petition to streaming services rather than a court ruling, so it set no legal precedent.

As tools become more powerful, regulatory bodies need to find a way to resolve this.

Is generative AI systematically infringing copyright?

The fundamental issue at the heart of all debates on intellectual property and artificial intelligence is this: generative AI cannot exist in isolation.

A GenAI tool can't just conjure something out of nothing. It must be trained on vast sets of existing data, gather understanding and knowledge from this data, and then apply this in the generation of material.

Immediately, this raises an important question. Is all generative AI infringing copyright — even if there is no deepfake mimicry involved?

Many would argue yes, it is infringing copyright. In 2024, an OpenAI researcher named Suchir Balaji went public with his fears after leaving the company. Speaking in October, Suchir described how he felt OpenAI was violating copyright law and damaging the internet. In an extremely tragic footnote to this story, Suchir Balaji died the following month — authorities believe he took his own life.

Suchir is not the only accuser of OpenAI. In December 2024, Indian news agency Asian News International brought a lawsuit against the firm, stating that the firm's ChatGPT software was infringing its copyright, falsely attributing material, and improperly using content.

This is not the first time OpenAI has faced an AI intellectual property challenge. In 2023, The New York Times sued OpenAI on similar grounds, describing how ChatGPT was using its material illegally.

The outcome of both cases will be very interesting, as OpenAI has previously stated that it is impossible to create bots like ChatGPT without copyrighted material. The legal future of generative AI, at least in its current form, hangs in the balance.

The next steps in AI intellectual property law

Right now, it appears we have plenty of questions and not many answers. But those answers will come — they have to. AI is not going away, so legislators and policymakers need to develop structures that can adequately contain it while still realising its benefits.

Victor Adames of BCB Law & Business is optimistic for the future. He believes that "collaboration between policymakers, legal experts, industry stakeholders, and academia" can help develop "equitable solutions that balance the interests of creators, users, and society at large."

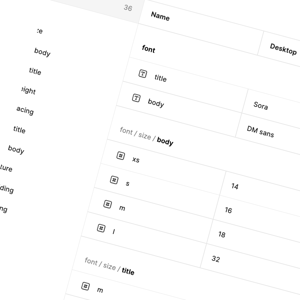

To make this happen, however, we will need a more concrete set of definitions and parameters. In an article for HG Lawyers, published in August 2024, Jeremy Bazley described how current terminology is becoming unfit for purpose. Terms like "independent intellectual effort," used in Australia to decide whether a work can be protected as intellectual property, "may become increasingly blurred as the technology advances."

Perhaps this is where the first steps need to be made: actually defining key terms in relation to AI and understanding what we're all dealing with. Only when we're all on the same page can we take any real strides towards solving AI's problem with intellectual property.