The idea of AI systems communicating with human users has really blown up in recent years, as generative AI solutions and chatbots have come to the fore. But what sometimes gets forgotten is that human communication is not monolithic. Different human users communicate with one another in different ways. A Spanish speaker is going to communicate differently from a Japanese or Hindi speaker, for example. And so AI systems need to be able to reflect this.

Natural language processing technology is already highly capable, but in the future, it will need to expand this capability even further. It will need to understand inputs in different languages and bring multilingual users together with the outputs it generates. This is already happening – Google Assistant is already available in 30 languages across more than 90 countries – but we're really only scratching the surface here.

The role of NLP in enabling multilingual AI services

So what does NLP need to achieve as part of an AI system? Here are a few of the key roles.

Understanding language inputs

Perhaps the simplest function of NLP in multilingual AI is language understanding. The system receives an input in one language – French, for example – and comprehends this. The system can then derive insight from the information it has received and decide on its next action.

NLP models like multilingual BERT (mBERT) and XLM-RoBERTa are trained on extensive multilingual datasets, so a single model can represent text from many languages in a shared space. This breaks down linguistic boundaries and helps keep AI behaviour consistent across borders.

Translation and localisation

NLP may be used to translate inputs from one language into another, with minimal latency. So the system may receive an input in German and translate this into English, or Italian, or something else, almost immediately. The translation is close to seamless, and following these inputs and outputs in real time is much like reading a translated conversation.

The integration of neural translation models, like those developed by Google or DeepL, makes this possible. The technology can translate conversations between human users, or it can deliver a generated output in a specific language.

Service consistency

There is more to language than just the specific meanings of individual words. GenAI service bots need to offer the same level of empathy, consideration, and sentiment to all users regardless of the language they're operating in.

Cross-lingual sentiment analysis models, embedded into the system, can make sure this is the case. These models conduct tonal and contextual analyses to ensure parity across different service regions.
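As a rough illustration of what a parity check could look like, the sketch below compares average sentiment per language against the median language. The scores, the `sentiment_parity` helper, and the 0.1 tolerance are all assumptions made for this example; in practice the scores would come from whichever cross-lingual sentiment model the system uses.

```python
from statistics import mean, median

def sentiment_parity(scores_by_language, tolerance=0.1):
    """Return languages whose mean sentiment drifts from the median language.

    scores_by_language maps a language code to sentiment scores in [-1, 1].
    The tolerance is an arbitrary threshold chosen for this sketch.
    """
    means = {lang: mean(scores) for lang, scores in scores_by_language.items()}
    baseline = median(means.values())
    return {
        lang: round(value - baseline, 3)
        for lang, value in means.items()
        if abs(value - baseline) > tolerance
    }

# Hypothetical scores for bot replies, as produced by some sentiment model.
replies = {
    "en": [0.60, 0.70, 0.65],
    "de": [0.60, 0.62, 0.70],
    "ja": [0.20, 0.30, 0.25],
}

flagged = sentiment_parity(replies)
print(flagged)  # languages whose replies read noticeably warmer or colder
```

Using the median as the baseline keeps one outlying language from dragging the reference point towards itself, which a plain average would do.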

The challenges of multilingual AI – and how to overcome them

Natural language processing has already overcome a number of different challenges to bring us to where we are today. For instance, analysing the context of a word to arrive at the intended meaning – the contextual difference between "the nervous system" and "a nervous disposition", as an example – was once deemed almost impossible but is now the norm.

However, multilingual AI brings with it a wealth of other challenges and problems. Fortunately, these are challenges that can be overcome.

Data scarcity

In an ideal world, we'd have the same level of data resource for all the world's languages. Unfortunately, we don't live in an ideal world – and so we have a wealth of training resources for languages like English, Spanish, and Mandarin Chinese, and comparatively few for Xhosa and Lao. This is a problem, as it immediately introduces disparities in quality and capability.

There are ways to overcome this. We can use transfer learning, training models on multiple languages in a single batch so that they recognise general linguistic patterns and carry them over to languages with less data. Meta's (formerly Facebook's) No Language Left Behind (NLLB) project is a good example of this, using data augmentation to fill the gaps in existing resources.
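One practical question when mixing languages in a single batch is how often each language should be sampled. A common approach, not described above and shown here only as a sketch, is to raise each language's share of the data to a power below 1, which boosts low-resource languages. The corpus sizes and the `alpha` value are made up for illustration.

```python
import random

def sampling_probabilities(sentence_counts, alpha=0.3):
    """Smooth per-language sampling probabilities.

    alpha=1.0 samples in proportion to corpus size; smaller values
    flatten the distribution in favour of low-resource languages.
    """
    total = sum(sentence_counts.values())
    weights = {lang: (n / total) ** alpha for lang, n in sentence_counts.items()}
    norm = sum(weights.values())
    return {lang: w / norm for lang, w in weights.items()}

# Made-up corpus sizes (number of sentences per language).
corpus = {"en": 1_000_000, "es": 400_000, "xh": 5_000, "lo": 2_000}

proportional = sampling_probabilities(corpus, alpha=1.0)
smoothed = sampling_probabilities(corpus, alpha=0.3)

# Draw the languages for one mixed batch of eight examples.
rng = random.Random(0)
batch_languages = rng.choices(list(smoothed), weights=list(smoothed.values()), k=8)
print(batch_languages)
```

With smoothing, Xhosa and Lao appear in batches far more often than their raw share of the data would allow, at the cost of seeing the same low-resource examples more frequently.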

Capacity issues

AI researchers sometimes talk about the "curse of multilinguality". In other words, the more languages that a fixed-size model tries to master, the less capacity it has left for each one. The model simply cannot develop enough of a specialised understanding of any single language.

To some extent, this is just a problem of scale. If we scale up the language model, its capacity for language learning increases too. But we can't scale up indefinitely. Instead, we can look to modular learning, where different components of the model are designed to specialise in specific languages or language groups.
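The modular idea can be pictured as a shared model plus small language-specific components that are switched in per request. The toy sketch below shows only the routing; the `shared_encoder`, the adapters, and the language groupings are hypothetical stand-ins, not a real architecture.

```python
from typing import Callable, Dict, List

Vector = List[float]

def shared_encoder(features: Vector) -> Vector:
    """Stand-in for the large shared model used by every language."""
    return [2.0 * x for x in features]

def make_adapter(scale: float, shift: float) -> Callable[[Vector], Vector]:
    """Build a tiny residual adapter: output = hidden + (scale * hidden + shift)."""
    def adapter(hidden: Vector) -> Vector:
        return [h + (scale * h + shift) for h in hidden]
    return adapter

# Hypothetical adapters, one per language group rather than one per language.
ADAPTERS: Dict[str, Callable[[Vector], Vector]] = {
    "romance": make_adapter(0.1, 0.0),
    "bantu": make_adapter(-0.2, 0.5),
}
LANGUAGE_GROUPS = {"es": "romance", "it": "romance", "xh": "bantu", "zu": "bantu"}

def encode(features: Vector, lang: str) -> Vector:
    """Run the shared encoder, then the adapter for the language's group if any."""
    hidden = shared_encoder(features)
    adapter = ADAPTERS.get(LANGUAGE_GROUPS.get(lang, ""))
    return adapter(hidden) if adapter else hidden

print(encode([1.0, 0.5], "es"))
print(encode([1.0, 0.5], "xh"))
```

Only the small adapters differ between language groups, so adding a group means training one extra component rather than growing the whole model.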

Variations in script and morphologies

It's not just languages that vary – scripts do too. Latin, Cyrillic, Arabic, Devanagari, Chinese, Japanese – these are just a few of the different writing systems used by cultures around the world. There are also significant variations even within the same writing system, and different writing directions. For example, Hebrew is written from right to left, while traditional Mongolian is written vertically from top to bottom. AI solutions need to be able to read inputs in these systems and also deliver outputs.
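To make the point about direction concrete, here is a minimal sketch that guesses the base direction of a string from its first strongly directional character, using Python's standard `unicodedata` module. The `base_direction` helper is an illustrative name, and the heuristic is a simplification: it says nothing about vertical layouts such as traditional Mongolian, which are a rendering concern rather than a character property.

```python
import unicodedata

# Bidirectional classes that mark right-to-left characters
# ("R" for Hebrew and similar scripts, "AL" for Arabic letters).
RTL_CLASSES = {"R", "AL"}

def base_direction(text: str) -> str:
    """Guess text direction from the first strongly directional character."""
    for char in text:
        bidi_class = unicodedata.bidirectional(char)
        if bidi_class in RTL_CLASSES:
            return "rtl"
        if bidi_class == "L":
            return "ltr"
    return "neutral"  # only digits, spaces, or punctuation were found

for sample in ["hello", "שלום עולם", "مرحبا", "你好", "123 !"]:
    print(repr(sample), base_direction(sample))
```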

Subword tokenisation may offer a solution. Language models learn their own vocabulary of commonly used word components, which they then apply during input processing and output generation. Google is spearheading improvements in this field through the ByT5 project, which uses a byte-level model that works directly on UTF-8 bytes to sidestep issues with scripts and symbols.
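The appeal of working at the byte level is that every script reduces to the same small set of values. The sketch below is a simplified illustration rather than the actual ByT5 tokeniser: it maps text to UTF-8 byte ids and back, and the offset of 3 reserved ids is an assumption made here to leave room for special tokens.

```python
RESERVED_IDS = 3  # assumption: ids 0-2 are kept free for special tokens

def to_byte_ids(text: str, offset: int = RESERVED_IDS) -> list:
    """Encode text as UTF-8 and shift each byte past the reserved ids."""
    return [byte + offset for byte in text.encode("utf-8")]

def from_byte_ids(ids: list, offset: int = RESERVED_IDS) -> str:
    """Invert to_byte_ids by undoing the shift and decoding UTF-8."""
    return bytes(i - offset for i in ids).decode("utf-8")

samples = ["hello", "привет", "שלום", "नमस्ते", "你好"]
for sample in samples:
    ids = to_byte_ids(sample)
    # The id vocabulary never exceeds 256 + offset, whatever the script.
    print(sample, len(sample), "characters ->", len(ids), "ids")
```

The trade-off is sequence length: non-Latin scripts need two or three bytes per character, so the same sentence becomes a longer input for the model.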

Tone and context consistency

In a truly multilingual environment, there will be language shifts. Conversations may pivot from one language to another, creating a complex cross-cultural system of reference and connection.

For multilingual human beings, this becomes almost second nature. Someone who speaks Spanish or Urdu at home but speaks English or French at work can probably switch automatically between different tongues.

For an AI solution, however, this is going to be difficult. Right now, there is no definitive answer for how this can be perfected. Current research endeavours – such as the Masakhane project, which focuses on African languages – are honing algorithms and growing data resources in an attempt to expand this capability.
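A very rough first step towards handling language shifts is simply noticing where they happen. The sketch below flags points where consecutive words change script, using Unicode character names as a proxy. This is a heuristic chosen for illustration, with hypothetical helper names, and it has an obvious limit: switches between languages that share a script, such as Spanish and English, go unnoticed and would need a proper language identification model.

```python
import unicodedata

def word_script(word: str) -> str:
    """Return the script of the first letter in a word, e.g. LATIN or DEVANAGARI."""
    for char in word:
        if char.isalpha():
            # Unicode names start with the script, e.g. "DEVANAGARI LETTER CA".
            return unicodedata.name(char, "UNKNOWN").split()[0]
    return "NONE"  # no letters at all, such as a number or punctuation

def switch_points(text: str) -> list:
    """List (word_index, previous_script, new_script) where the script changes."""
    scripts = [word_script(word) for word in text.split()]
    return [
        (i, scripts[i - 1], scripts[i])
        for i in range(1, len(scripts))
        if scripts[i] != scripts[i - 1] and "NONE" not in (scripts[i - 1], scripts[i])
    ]

print(switch_points("I love चाय and समोसा"))
print(switch_points("hola my friend"))  # same script, so nothing is flagged
```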

A growing list of multilingual NLP frameworks, libraries, and tools

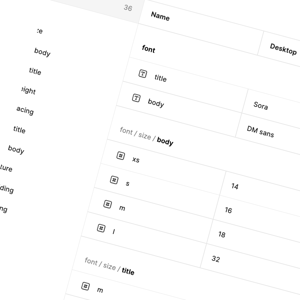

There is already a wide range of different NLP frameworks capable of supporting multilingual processing. These include:

- mBERT and XLM-RoBERTa are multilingual transformer models, trained on 104 and 100 languages respectively.

- MarianNMT and OpenNMT are frameworks designed for effective machine translation.

- Facebook's M2M-100 is designed to offer translation across 100 different languages without using English as an intermediary.

Development is supported by the following libraries, expanding the data foundation upon which multilingual generative AI is built.

- The Hugging Face Transformers and Tokenizers libraries tokenise text across different languages, maintaining consistency and making multilingual NLP accessible to more developers.

- The spaCy library offers multilingual pipelines and pre-trained models for more than 12 languages.

- The Stanford NLP (Stanza) library also offers pre-trained models across multiple languages.

All of this contributes to a bright future, and an increasingly viable present, for multilingual NLP. With a rich and growing ecosystem of solutions, and with a growing focus on gathering data for traditionally underrepresented languages, advanced multilingual NLP is rapidly becoming a globe-spanning asset that is going to change the way in which we interact with one another.